Data Mining: Introduction and Classification

Introduction

Recent years have witnessed an explosive growth in the amounts of data collected, stored, and disseminated by various organizations. Examples include (1) the large volumes of point-of-sale data amassed at the checkout counters of grocery stores, (2) the continuous streams of satellite images produced by Earth-observing satellites, and (3) the avalanche of data logged by network monitoring software. To illustrate how much the quantity of data has grown over the years, Figure 61.1 shows an example of the number of Web pages indexed by a popular Internet search engine since 1998.

In each of the domains described above, data is collected to satisfy the information needs of the various organizations: Commercial enterprises analyze point-of-sale data to learn the purchase behavior of their customers; Earth scientists use satellite image data to advance their understanding of how the Earth system is changing in response to natural and human-related factors; and system administrators employ network traffic data to detect potential network problems, including those resulting from cyber-attacks.

One immediate difficulty encountered in these domains is how to extract useful information from massive data sets. Indeed, getting information out of the data is like drinking from a fire hose. The sheer size of the data simply overwhelms our ability to manually sift through it in the hope of finding useful information. Fueled by the need to rapidly analyze and summarize the data, researchers have turned to data mining techniques [22, 27, 29, 30, 50]. In a nutshell, data mining is the task of discovering interesting knowledge automatically from large data repositories.

Interesting knowledge has different meanings to different people. From a business perspective, knowledge is interesting if it can be used by analysts or managers to make profitable business decisions. For Earth scientists, knowledge is interesting if it reveals previously unknown information about the characteristics of the Earth system. For system administrators, knowledge is interesting if it indicates unauthorized or illegitimate use of system resources.

Data mining is often considered to be an integral part of another process, called Knowledge Discovery in Databases (or KDD). KDD refers to the overall process of turning raw data into interesting knowledge and consists of a series of transformation steps, including data preprocessing, data mining, and postprocessing. The objective of data preprocessing is to convert data into the right format for subsequent analysis by selecting the appropriate data segments and extracting attributes that are relevant to the data mining task (feature selection and construction). For many practical applications, more than half of the knowledge discovery efforts are devoted to data preprocessing. Postprocessing includes all additional operations performed to make the data mining results more accessible and easier to interpret. For example, the results can be sorted or filtered according to various measures to remove uninteresting patterns. In addition, visualization techniques can be applied to help analysts explore data mining results.

Data Mining Tasks and Techniques

Data mining tasks are often divided into two major categories:

Predictive The goal of predictive tasks is to use the values of some variables to predict the values of other variables. For example, in Web mining, e-tailers are interested in predicting which online users will make a purchase at their Web site. Other examples include biologists, who would like to predict the functions of proteins, and stock market analysts, who would like to forecast the future prices of various stocks.

Descriptive The goal of descriptive tasks is to find human-interpretable patterns that describe the underlying relationships in the data. For example, Earth scientists are interested in discovering the primary forcings influencing observed climate patterns. In network intrusion detection, analysts want to know the kinds of cyber-attacks being launched against their networks. In document analysis, it is useful to find groups of documents, where the documents in each group share a common topic.

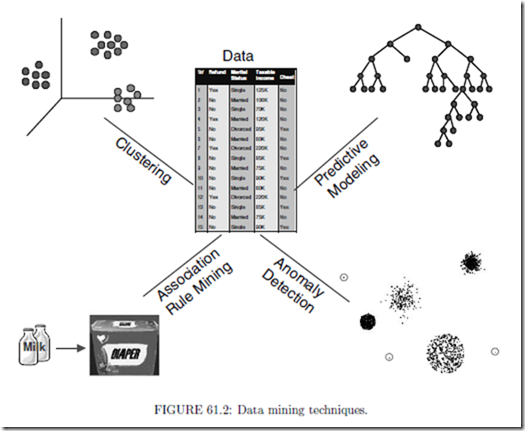

Data mining tasks can be accomplished using a variety of data mining techniques, as shown in Figure 61.2.

• Predictive modeling is used primarily for predictive data mining tasks. The input data for predictive modeling consists of two distinct types of variables: (1) explanatory variables, which define the essential properties of the data, and (2) one or more target variables, whose values are to be predicted. For the Web mining example given in the previous section, the input variables correspond to the demographic features of online users, such as age, gender, and salary, along with their browsing activities, e.g., what pages are accessed and for how long. There is one binary target variable, Buy, which has values, Yes or No, indicating, respectively, whether the user will buy anything from the Web site or not. Predictive modeling techniques can be further divided into two categories: classification and regression. Classification techniques are used to predict the values of discrete target variables, such as the Buy variable for online users at a Web site. For example, they can be used to predict whether a customer will most likely be lost to a competitor, i.e., customer churn or attrition, and to determine the category of a star or galaxy for sky survey cataloging. Regression techniques are used to predict the values of continuous target variables, e.g., they can be applied to forecast the future price of a stock.

• Association rule mining seeks to produce a set of dependence rules that predict the occurrence of a variable given the occurrences of other variables. For example, association analysis can be used to identify products that are often purchased together by sufficiently many customers, a task that is also known as market basket analysis. Furthermore, given a database that records a sequence of events, e.g., a sequence of successive purchases by customers, an important task is that of finding dependence rules that capture the temporal connections of events. This task is known as sequential pattern analysis.

• Cluster analysis finds groupings of data points so that data points that belong to one cluster are more similar to each other than to data points belonging to a different cluster, e.g., clustering can be used to perform market segmentation of customers, document categorization, or land segmentation according to vegetation cover. While cluster analysis is often used to better understand or describe the data, it is also useful for summarizing a large data set. In this case, the objects belonging to a single cluster are replaced by a single representative object, and further data analysis is then performed using this reduced set of representative objects.

• Anomaly detection identifies data points that are significantly different from the rest of the points in the data set. Thus, anomaly detection techniques have been used to detect network intrusions and to predict fraudulent credit card transactions. Some approaches to anomaly detection are statistically based, while others are based on distance or graph-theoretic notions.

Challenges of Data Mining

There are several important challenges in applying data mining techniques to large data sets:

Scalability Scalable techniques are needed to handle the massive size of some of the datasets that are now being created. As an example, such datasets typically require the use of efficient methods for storing, indexing, and retrieving data from secondary or even tertiary storage systems. Furthermore, parallel or distributed computing approaches are often necessary if the desired data mining task is to be performed in a timely manner. While such techniques can dramatically increase the size of the datasets that can be handled, they often require the design of new algorithms and data structures.

Dimensionality In some application domains, the number of dimensions (or attributes of a record) can be very large, which makes the data difficult to analyze because of the ‘curse of dimensionality’ [9]. For example, in bioinformatics, the development of advanced microarray technologies allows us to analyze gene expression data with thousands of attributes. The dimensionality of a data mining problem may also increase substantially due to the temporal, spatial, and sequential nature of the data.

Complex Data Traditional statistical methods often deal with simple data types such as continuous and categorical attributes. However, in recent years, more complicated types of structured and semi-structured data have become more important. One example of such data is graph-based data representing the linkages of web pages, social networks, or chemical structures. Another example is the free-form text that is found on most web pages. Traditional data analysis techniques often need to be modified to handle the complex nature of such data.

Data Quality Many data sets have one or more problems with data quality, e.g., some values may be erroneous or inexact, or there may be missing values. As a result, even if a ‘perfect’ data mining algorithm is used to analyze the data, the information discovered may still be incorrect. Hence, there is a need for data mining techniques that can perform well when the data quality is less than perfect.

Data Ownership and Distribution For a variety of reasons, e.g., privacy and ownership, some collections of data are distributed across a number of sites. In many such cases, the data cannot be centralized, and thus, the choice is either distributed data mining or no data mining. Challenges involved in developing distributed data mining solutions include the need for efficient algorithms to cope with distributed and heterogeneous data sets, the need to minimize the cost of communication, and the need to accommodate data security and data ownership policies.

Data Mining and the Role of Data Structures and Algorithms

Research in data mining is motivated by a number of factors. In some cases, the goal is to develop an approach with greater efficiency. For example, a current technique may work well as long as all of the data can be held in main memory, but the size of data sets has grown to the point where this is no longer possible. In other cases, the goal may be to develop an approach that is more flexible. For instance, the nature of the data may be continually changing, and it may be necessary to develop a model of the data that can also change. As an example, network traffic varies in volume and kind, often over relatively short time periods. In yet other cases, the task is to obtain a more accurate model of the data, i.e., one that takes into account additional factors that are common in many real world situations.

The development and success of new data mining techniques depend heavily on the creation of the proper algorithms and data structures to address needs such as those just described: efficiency, flexibility, and more accurate models. (This is not to say that system or applications issues are unimportant.) Sometimes, currently existing data structures and algorithms can be directly applied, e.g., data access methods can be used to efficiently organize and retrieve data. However, since currently existing data structures and algorithms were typically not designed with data mining tasks in mind, it is frequently the case that some modifications, enhancements, or completely new approaches are needed, i.e., new work in data structures and algorithms is needed. We would emphasize, though, that sometimes it is the concepts and viewpoints associated with currently existing algorithms and data structures that are the most useful. Thus, the realization that a problem can be formulated as a particular type of a graph or tree may quickly lead to a solution.

In the following sections, we provide some examples of how data structures play an important role, both conceptually and practically, for classification, association analysis, and clustering.

Classification

Classification [21, 43] is the task of assigning objects to their respective categories. For example, stock analysts are interested in classifying the stocks of publicly-owned companies as buy, hold, or sell, based on the financial outlook of these companies. Stocks classified as buy are expected to have stronger future revenue growth than those classified as sell. In addition to the practical uses of classification, it also helps us to understand the similarities and differences between objects that belong to different categories.

The data set in a classification problem typically consists of a collection of records or data objects. Each record, also known as an instance or example, is characterized by a tuple (x, y), where x is the set of explanatory variables associated with the object and y is the object’s class label. A record is said to be labeled if the value of y is known; otherwise, the record is unlabeled. Each attribute xk ∈ x can be discrete or continuous. On the other hand, the class label y must be a discrete variable whose value is chosen from a finite set {y1, y2, ..., yc}. If y is a continuous variable, then this problem is known as regression.

The classification problem can be stated formally as follows:

Classification is the task of learning a function, f : x → y, that maps the explanatory variables x of an object to one of the class labels for y.

f is known as the target function or classification model.

Nearest-Neighbor Classifiers

Typically, the classification framework presented involves a two-step process: (1) an inductive step for constructing classification models from data, and (2) a deductive step for applying the derived model to previously unseen instances. For decision tree induction and rule-based learning systems, the models are constructed immediately after the training set is provided. Such techniques are known as eager learners because they intend to learn the model as soon as possible, once the training data is available.

An opposite strategy would be to delay the process of generalizing the training data until it is needed to classify the unseen instances. One way to do this is to find all training examples whose attributes are relatively similar to those of the test instance. Such examples are known as the nearest neighbors of the test instance. The test instance can then be classified according to the class labels of its neighbors. This is the central idea behind the nearest-neighbor classification scheme [4, 17, 18, 21], which is useful for classifying data sets with continuous attributes. A nearest neighbor classifier represents each instance as a data point embedded in a d-dimensional space, where d is the number of continuous attributes. Given a test instance, we can compute its distance to the rest of the data objects (data points) in the training set by using an appropriate distance or similarity measure, e.g., the standard Euclidean distance measure.

The k-nearest neighbors of an instance z are defined as the data points having the k smallest distances to z.

Figure 61.3 illustrates an example of the 1-, 2- and 3-nearest neighbors of an unknown instance, ×, located at the center of the circle. The instance can be assigned to the class label of its nearest neighbors. If the nearest neighbors contain more than one class label, then one takes a majority vote among the class labels of the nearest neighbors.

The nearest data point to the unknown instance shown in Figure 61.3(a) has a negative class label. Thus, in a 1-nearest neighbor classification scheme, the unknown instance would be assigned to the negative class. If we consider a larger number of nearest neighbors, such as three, the list of nearest neighbors would contain two training examples from the positive class and one from the negative class. Using the majority voting scheme, the instance would be classified as positive. If the number of instances from both classes is the same, as in the case of the 2-nearest neighbor classification scheme shown in Figure 61.3(b), we could choose either one of the classes (or the default class) as the class label.

A summary of the k-nearest neighbor classification algorithm is given in Figure 61.4.

Given an unlabeled instance, we need to determine its distance or similarity to all the training instances. This operation can be quite expensive and may require efficient indexing techniques to reduce the amount of computation.

(k: number of nearest neighbors, E: training instances, z: unlabeled instance)

1: Compute the distance or similarity of z to all the training instances

2: Let Et ⊂ E be the set of k closest training instances to z

3: Return the predicted class label for z: class ← Voting(Et).

FIGURE 61.4: k-nearest neighbor classification algorithm.
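The three steps of Figure 61.4 can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: the function names, the toy training set, and the tie-breaking rule (use a caller-supplied default class if given, otherwise the label of the closest tied neighbor) are assumptions made for the example. The toy data is arranged so that the 1-, 2-, and 3-nearest-neighbor outcomes mirror the situation described for Figure 61.3.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Standard Euclidean distance between two equal-length numeric tuples."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def knn_classify(k, training, z, default=None):
    """Predict a class label for z from a list of (point, label) records.

    Step 1: compute the distance from z to every training instance.
    Step 2: keep the k closest training instances.
    Step 3: return the majority label among them.
    Tie-breaking is an assumption of this sketch: return `default` if one
    is given, otherwise the label of the closest tied neighbor.
    """
    ranked = sorted(training, key=lambda rec: euclidean(rec[0], z))
    neighbors = ranked[:k]
    votes = Counter(label for _, label in neighbors)
    top = max(votes.values())
    tied = {label for label, count in votes.items() if count == top}
    if len(tied) > 1 and default is not None:
        return default
    # neighbors is sorted by distance, so this picks the closest tied label
    return next(label for _, label in neighbors if label in tied)


# Hypothetical toy data: one nearby negative example, two positives a bit
# farther away, and a distant negative example.
training = [
    ((1.0, 1.0), "-"),
    ((2.0, 0.0), "+"),
    ((0.0, 2.5), "+"),
    ((5.0, 5.0), "-"),
]
z = (0.0, 0.0)

print(knn_classify(1, training, z))               # nearest neighbor is negative
print(knn_classify(3, training, z))               # two positives outvote one negative
print(knn_classify(2, training, z, default="+"))  # tie, resolved by the default class
```

Step 1 here is a linear scan over all training instances, which is the cost that the indexing techniques mentioned above are meant to reduce.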

While one can take a majority vote of the nearest neighbors to select the most likely class label, this approach may not be desirable because it assumes that the influence of each nearest neighbor is the same. An alternative approach is to weight the influence of each nearest neighbor according to its distance, so that more distant neighbors have less influence on the predicted label.
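As an illustration of distance weighting, the sketch below lets each of the k nearest neighbors contribute a vote of 1/d², where d is its distance to the test instance. The inverse-square weight is only one possible choice and is an assumption of this example, as are the function name and the toy data; the text above does not prescribe a particular weighting function.

```python
import math
from collections import defaultdict


def weighted_knn_classify(k, training, z, eps=1e-12):
    """Distance-weighted vote over the k nearest (point, label) records.

    Each neighbor adds 1 / (d^2 + eps) to the score of its label, so close
    neighbors count for more than distant ones. The weight function and
    eps (which avoids division by zero) are assumptions of this sketch.
    """
    def dist(a, b):
        return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

    scored = sorted(((dist(x, z), y) for x, y in training), key=lambda t: t[0])
    scores = defaultdict(float)
    for d, label in scored[:k]:
        scores[label] += 1.0 / (d * d + eps)
    return max(scores, key=scores.get)


# Hypothetical toy data: the single closest neighbor is negative, the next
# two are positive but farther away.
weighted_training = [
    ((1.0, 1.0), "-"),
    ((2.0, 0.0), "+"),
    ((0.0, 2.5), "+"),
    ((5.0, 5.0), "-"),
]

print(weighted_knn_classify(3, weighted_training, (0.0, 0.0)))
```

With k = 3 on this data, an unweighted majority vote would favor the two positive neighbors, whereas the weighted vote favors the single negative neighbor because it is much closer (weight 0.5 against 0.25 + 0.16).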

Proximity Graphs for Enhancing Nearest Neighbor Classifiers

The nearest neighbor classification scheme, while simple, has a serious problem as currently presented: It is necessary to store all of the data points, and to compute the distance between an object to be classified and all of these stored objects. If the set of original data points is large, then this can be a significant computational burden. Hence, a considerable amount of research has been conducted into strategies to alleviate this problem.

There are two general strategies for addressing the problem just discussed:

Condensing The idea is that we can often eliminate many of the data points without affecting classification performance, or without affecting it very much. For instance, if a data object is in the ‘middle’ of a group of other objects with the same class, then its elimination will likely have no effect on the nearest neighbor classifier.

Editing Often, the classification performance of a nearest neighbor classifier can be enhanced by deleting certain data points. More specifically, if a given object is compared to its nearest neighbors and most of them are of another class (i.e., if the points that would be used to classify the given point are of another class), then deleting the given object will often improve classifier performance.
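The editing rule just described can be sketched as follows. This is a minimal illustration under stated assumptions: the function name, the choice k = 3, the use of Euclidean distance, and the decision to evaluate every point against the original (unedited) training set are all choices made for the example.

```python
import math


def edit_training_set(training, k=3):
    """Drop each (point, label) record whose k nearest other records
    mostly carry a different label; keep everything else."""
    def dist(a, b):
        return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

    kept = []
    for i, (x, y) in enumerate(training):
        others = [rec for j, rec in enumerate(training) if j != i]
        nearest = sorted(others, key=lambda rec: dist(rec[0], x))[:k]
        disagree = sum(1 for _, label in nearest if label != y)
        if disagree * 2 <= len(nearest):  # keep unless a strict majority disagrees
            kept.append((x, y))
    return kept


# Hypothetical toy data: a "+" cluster with one stray "-" point in its
# middle, plus a separate "-" cluster.
noisy = [
    ((0.0, 0.0), "+"), ((0.0, 1.0), "+"), ((1.0, 0.0), "+"), ((1.0, 1.0), "+"),
    ((0.5, 0.5), "-"),
    ((5.0, 5.0), "-"), ((5.0, 6.0), "-"), ((6.0, 5.0), "-"), ((6.0, 6.0), "-"),
]

edited = edit_training_set(noisy, k=3)
print(len(noisy), "->", len(edited))
```

On this data only the stray negative point inside the positive cluster is deleted, since all three of its nearest neighbors are positive.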

While various approaches to condensing and editing points to improve the performance of nearest neighbor classifiers have been proposed, there has been a considerable amount of work that approaches this problem from the viewpoint of computational geometry, especially proximity graphs [49]. Proximity graphs include nearest neighbor graphs, minimum spanning trees, relative neighborhood graphs, Gabriel graphs, and the Delaunay triangulation [35]. We can only indicate briefly the usefulness of this approach, and refer the reader to [49] for an in-depth discussion.

First, we consider how Voronoi diagrams can be used to eliminate points that add nothing to the classification. (The Voronoi diagram for a set of data points is the set of polygons formed by partitioning all of the points in the space into a set of convex regions, one per data point, such that every point in a region is closer to that region’s data point than to any other data point. Figure 61.5 shows a Voronoi diagram for a number of two-dimensional points.) Specifically, if all the Voronoi neighbors of a point, i.e., those points belonging to Voronoi regions that touch the Voronoi region of the given point, have the same class as the given data point, then discarding that point cannot affect the classification performance. The reason for this is that the Voronoi regions of the neighboring points will expand to ‘occupy’ the space once occupied by the Voronoi region of the given point, and thus, classification behavior is unchanged. More sophisticated approaches based on proximity graphs are possible [49].
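The Voronoi-based condensing rule can be sketched for small two-dimensional data sets. The example relies on the fact that two points are Voronoi neighbors exactly when they are joined by an edge of the Delaunay triangulation, and it finds those edges by brute force: a triangle is accepted when no other point lies strictly inside its circumcircle. The function names, tolerances, and toy data are assumptions of this sketch, and the O(n⁴) search is meant only to make the idea concrete, not to be efficient.

```python
from itertools import combinations


def circumcircle(a, b, c):
    """Return (center_x, center_y, radius_squared) of the circle through
    a, b, c, or None if the three points are (nearly) collinear."""
    ax, ay = a
    bx, by = b
    cx, cy = c
    d = 2.0 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    if abs(d) < 1e-12:
        return None
    a2, b2, c2 = ax * ax + ay * ay, bx * bx + by * by, cx * cx + cy * cy
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    return ux, uy, (ax - ux) ** 2 + (ay - uy) ** 2


def voronoi_neighbors(points):
    """Map each point index to the indices of its Voronoi neighbors,
    found as edges of brute-force Delaunay triangles (2-D only)."""
    nbrs = {i: set() for i in range(len(points))}
    for i, j, k in combinations(range(len(points)), 3):
        circle = circumcircle(points[i], points[j], points[k])
        if circle is None:
            continue
        ux, uy, r2 = circle
        empty = all(
            (px - ux) ** 2 + (py - uy) ** 2 >= r2 - 1e-9
            for m, (px, py) in enumerate(points)
            if m not in (i, j, k)
        )
        if empty:  # empty circumcircle: (i, j, k) is a Delaunay triangle
            for u, v in ((i, j), (j, k), (i, k)):
                nbrs[u].add(v)
                nbrs[v].add(u)
    return nbrs


def voronoi_condense(training):
    """Keep only records with at least one Voronoi neighbor of another class.
    Records with no neighbors at all (degenerate input) are kept."""
    points = [x for x, _ in training]
    labels = [y for _, y in training]
    nbrs = voronoi_neighbors(points)
    return [
        training[i]
        for i in range(len(training))
        if not nbrs[i] or any(labels[j] != labels[i] for j in nbrs[i])
    ]


# Hypothetical toy data: five "A" points on the left, two "B" points on the right.
data = [
    ((0.0, 0.0), "A"), ((1.0, 0.0), "A"), ((0.0, 1.0), "A"),
    ((1.0, 1.0), "A"), ((0.5, 0.5), "A"),
    ((3.0, 0.0), "B"), ((3.0, 1.0), "B"),
]
condensed = voronoi_condense(data)
print(condensed)
```

On this data the three "A" points that have only "A" neighbors are discarded, and the four points that face the other class across the boundary are retained.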

For editing, i.e., discarding points to improve classification performance, proximity graphs can also be useful. In particular, instead of eliminating data points whose k nearest neighbors are of a different class, we build a proximity graph and eliminate those points where a majority of the neighbors in the proximity graph are of a different class. Of course, the results will depend on the type of proximity graph. The Gabriel graph has been found to be the best, but for further discussion, we once again refer the reader to [49], and the extensive list of references that it contains.
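A brute-force sketch of Gabriel-graph editing is given below. Two points are Gabriel neighbors when no third point lies inside the circle whose diameter is the segment joining them; this can be tested with squared distances alone. The function names and toy data are assumptions of the example, as is the convention that a point lying exactly on the circle does not block an edge. The O(n³) construction is for illustration only.

```python
def gabriel_neighbors(points):
    """Brute-force Gabriel graph: link i and j when no third point lies
    strictly inside the circle with the segment (i, j) as its diameter."""
    def d2(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    n = len(points)
    nbrs = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            dij = d2(points[i], points[j])
            # m is strictly inside the diameter circle
            # iff d(i, m)^2 + d(j, m)^2 < d(i, j)^2
            blocked = any(
                d2(points[i], points[m]) + d2(points[j], points[m]) < dij
                for m in range(n)
                if m != i and m != j
            )
            if not blocked:
                nbrs[i].add(j)
                nbrs[j].add(i)
    return nbrs


def gabriel_edit(training):
    """Drop each record for which a strict majority of its Gabriel
    neighbors carry a different label."""
    points = [x for x, _ in training]
    labels = [y for _, y in training]
    nbrs = gabriel_neighbors(points)
    kept = []
    for i, rec in enumerate(training):
        different = sum(1 for j in nbrs[i] if labels[j] != labels[i])
        if different * 2 <= len(nbrs[i]):
            kept.append(rec)
    return kept


# Hypothetical toy data: a "+" cluster with one stray "-" point in its
# middle, plus a separate "-" cluster.
samples = [
    ((0.0, 0.0), "+"), ((0.0, 1.0), "+"), ((1.0, 0.0), "+"), ((1.0, 1.0), "+"),
    ((0.5, 0.5), "-"),
    ((5.0, 5.0), "-"), ((5.0, 6.0), "-"), ((6.0, 5.0), "-"), ((6.0, 6.0), "-"),
]
cleaned = gabriel_edit(samples)
print(len(samples), "->", len(cleaned))
```

Unlike the k-nearest-neighbor rule, the number of neighbors consulted here is not fixed in advance; it is determined by the geometry of the data around each point.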

In summary, our goal in this section was to illustrate that, for one particular classification scheme (nearest neighbor classification), the rich set of data structures and algorithms of computational geometry, i.e., proximity graphs, has made a significant contribution, both practically and theoretically.
