Data Structures for Databases:Data Structures for Query Processing

Data Structures for Query Processing

Query evaluation is performed in main memory in several steps as outlined in Figure 60.2. Starting with the high-level input query expressed in a declarative language called SQL (see, for example, [2]) the Parser scans, parses, and validates the query.

The goal is to check

whether the query is formulated according to the syntax rules of the language supported in the DBMS. The parser also validates that all attribute and relation names are part of the database schema that is being queried.

The parser produces a parse tree which serves as input to the Query Translation and Rewrite module shown underneath the parser. Here the query is translated into an internal representation, which is based on the relational algebra notation [1]. Besides its compact form, a major advantage of using relational algebra is that there exist transformations (re- write rules) between equivalent expressions to explore alternate, more efficient forms of the same query. Different algebraic expressions for a query are called logical query plans and are represented as expression trees or operator trees. Using the re-write rules, the initial logical query plan is transformed into an equivalent plan that is expected to execute faster. Query re-writing is guided by heuristics which help reduce the amount of intermediary work that must be performed by the query in order to arrive at the same result.

A particularly challenging problem is the selection of the best join ordering for queries involving the join of three or more relations. The reason is that the order in which the input relations are presented to a join operator (or any other binary operator for that matter) tends to have an important impact on the cost of the operation. Unfortunately, the number of candidate plans grows rapidly when the number of input relations grows.

The outcome of the query translation and rewrite module is a set of “improved” logical query plans representing different execution orders or combinations of operators of the original query. The Physical Plan Generator converts the logical query plans into physical query plans which contain information about the algorithms to be used in computing the relational operators represented in the plan. In addition, physical query plans also contain information about the access methods available for each relation. Access methods are ways of retrieving tuples from a table and consist of either a file scan (i.e., a complete retrieval of all tuples) or an index plus a matching selection condition. Given the many different

options for implementing relational operators and for accessing the data, each logical plan may lead to a large number of possible physical plans. Among the many possible plans, the physical plan generator evaluates the cost for each and chooses the one with the lowest overall cost.

Finally, the best physical plan is submitted to the Code Generator which produces the executable code that is either executed directly or is stored and executed later whenever needed. Query re-writing and physical plan generation are referred to as query optimization. However, the term is misleading since in most cases the chosen plan represents a reasonably efficient strategy for executing a query.

In the following paragraphs, we focus on several important data structures that are used during query evaluation, some of which have been mentioned above: The parse tree for storing the parsed and validated input query (Section 60.2.3), the expression tree for representing logical and physical query plans (Section 60.2.4), and the histogram which is used to approximate the distribution of attribute values in the input relations (Section 60.2.5). We start with a summary of the well-known index structures and how they are used to speed up database operations. Since sorting plays an important role in query processing, we include a separate description of the data structures used to sort large data sets using external memory (Section 60.2.2).

Index Structures

An important part of the work of the physical plan generator is to choose an efficient implementation for each of the operators in the query. For each relational operator (e.g., selection, projection, join) there are several alternative algorithms available for implementation. The best choice usually depends on factors such as size of the relation, available memory in the buffer pool, sort order of the input data, and availability of index structures. In the following, we briefly highlight some of the important index structures that are used by a modern DBMS.

One-dimensional Indexes

One-dimensional indexes contain a single search key, which may be composed of multiple attributes. The most frequently used data structures for one-dimensional database indexes are dynamic tree-structured indexes such as B/B+-Trees (from now on collectively referred to as B-Trees, see also Chapter 15) and hash-based indexes using extendible and linear hashing (see Chapter 9).

In general, hash-based indexes are especially good for equality searches. For example, in the case of an equality selection operation, one can use a one- dimensional hash-based index structure to examine just the tuples that satisfy the given condition. Consider the selection of student records having a certain grade point average (GPA). Assuming students are randomly distributed throughout the relation, an index on the GPA value could lead us to only those records satisfying the selection condition and resulting in a lot fewer data transfers than a sequential scan of the relation (if we assume the tuples satisfying the condition make up only a fraction of the entire relation).

Given their superior performance for equality searches hash-based indexes prove to be particularly useful in implementing relational operations such as joins. For example, the index-nested-loop join algorithm generates many equality selection queries, making the difference in cost between a hash-based and the slightly more expensive tree-based implementation significant.

B-Trees provide efficient support for range searches (all data items that fall within a range of values) and are almost as good as hash-based indexes for equality searches. Besides their excellent performance, B-Trees are “self-tuning”, meaning they maintain as many levels of the index as is appropriate for the size of the relation being indexed. Unlike hash-based indexes, B-Trees manage the space on the disk blocks they use and do not require any overflow blocks. As we have mentioned in Sec. 60.1, database index structures are an example of data structures that have been designed as secondary memory structures.

Multi-dimensional Indexes

In addition to these one-dimensional index structures, many applications (e.g., geographic database, inventory and sales database for decision-support) also require data structures capable of indexing data existing in two or higher-dimensional spaces. In these domains, important database operations are selections involving partial matches (all points that match specified values in one or more dimensions), range queries (all points that fall within a range of values in one or more dimensions), nearest-neighbor queries (closest point to a given point), and so-called “where-am-I” queries (all the regions in which a given point is located).

The following are some of the most important data structures that support these types of operations.

Grid file. A multi-dimensional extension of one-dimensional hash tables. Grid files support range queries, partial-match queries, and nearest-neighbor queries well, as long as data is uniformly distributed.

Multiple-key index. The index on one attribute leads to indexes on another at- tribute for each value of the first. Multiple-key indexes are useful for range and nearest-neighbor queries.

R-tree. A B-Tree generalization suitable for collections of regions. R-Trees are used to represent a collection of regions by grouping them into a hierarchy of larger regions. They are well suited to support “where-am-I” queries as well as the other types of queries mentioned above if the atomic regions are individual points. (See also Chapter 21.)

Quad tree. Recursively divide a multi-dimensional data set into quadrants until each quadrant contains a minimal number of points (e.g., amount of data that can fit on a disk block). Quad trees support partial-match, range, and nearest-neighbor queries well. (See also Chapter 19/)

Bitmap index. A collection of bit vectors which encode the location of records with a given value in a given field. Bitmap indexes support range, nearest-neighbor, and partial-match queries and are often employed in data warehouses and decision- support systems. Since bitmap indexes tend to get large when the underlying attributes have many values, they are often compressed using a run-length en- coding.

Given the importance of database support for non-standard applications, many relational database management systems support one or more of these multi-dimensional indexes, either directly (e.g., bitmap indexes), or as part of a special-purpose extension to the core database engine (e.g., R-trees in a spatial extender).

In general, indexes are also used to answer certain types of queries without having to access the data file. For example, if we need only a few attribute values from each tuple and there is an index whose search key contains all these fields, we can choose an index scan instead of examining all data tuples. This is faster since index records are smaller (and hence fit into fewer buffer pages). Note that an index scan does not make use of the search structure of the index: for example, in a B-Tree index one would examine all leaf pages in sequence. All commercial relational database management systems support B-Trees and at least one type of hash-based index structure.

Sorting Large Data Sets

The need to sort large data sets arises frequently in data management. Besides outputting the result of a query in sorted order, sorting is useful for eliminating duplicate data items during the processing of queries. In addition, an efficient algorithm for performing a join operation (sort-merge join) requires the input relations to be sorted. Since the size of databases routinely exceeds the amount of available main memory, most DBMSs use an external sorting technique called merge sort, which is based on the main-memory version with the same name. The idea behind merge sort is that a file which does not fit into main memory can be sorted by breaking it into smaller pieces (runs), sorting the smaller runs individually, and then merging them to produce a single run that contains the original data items in sorted order. External merge sort is another example where main memory versions of algorithms and data structures need to be changed to accommodate a computing environment where all data resides on secondary and perhaps even tertiary storage. We will point out more such examples in Section 60.4 when we describe the disk space manager.

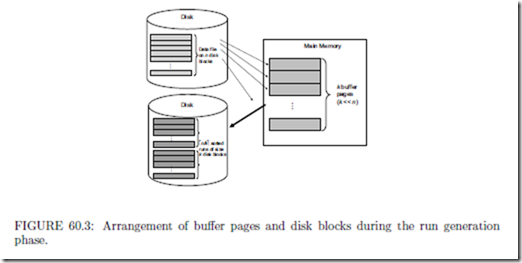

During the first phase, also called the run-generation phase, merge-sort fills the available buffer pages in main memory with blocks containing the data records from a file stored on disk. We will have more to say about the management of buffer pages when we discuss data structures for buffer management in Section 60.3. Sorting is done using any of the main-memory algorithms (e.g., Heapsort, Quicksort). The sorted records are written back to new blocks on disk, forming a sorted run containing as many blocks as there are available buffer pages in main memory. This process is repeated until all records in the data file are in one of the sorted runs. The arrangement of buffer pages and disk blocks during run generation is depicted in Figure 60.3.

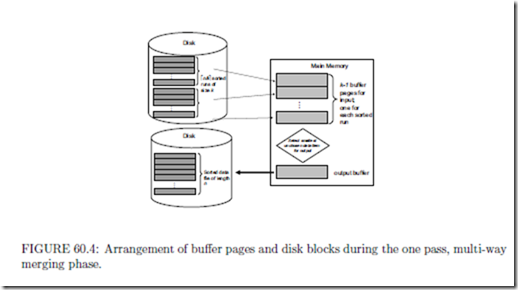

In the second phase, also called the merging phase, all but one of the main memory buffers are used to hold input data from one of the sorted runs. In most instances, the number

of sorted runs is less than the number of buffer pages and the merging can be done in one pass. Note, this so-called multi-way merging is different from the main-memory version of merge sort which merges pairs of runs (two-way merge). The arrangement of buffers and disk blocks to complete this one-pass multi-way merging step is shown in Figure 60.4. Note that the two-way merge strategy results in reading data in and out of memory 2 ∗ log2(n) times for n runs (versus reading all n runs only once for the n-way strategy).

In situations when the number of sorted runs exceeds the available buffer pages in main memory, the merging step must be performed in several passes as follows: assuming k buffer pages in main memory, each pass involves the repeated merging of k − 1 runs until all runs have been merged. At this point the number of runs has been reduced by a factor of k − 1.

If the reduced number of sublists is still greater than k, the merging is repeated until the number of sublists is less than k. A final merge generates the sorted output. In this scenario, the number of merge passes required is plogk−1(n/k)l.

A parse tree is an m-ary tree data structure that represents the structure of a query. Each interior node of the tree is labeled with a non-terminal symbol from the grammar of the query language. The root node is labeled with the goal symbol. The query being parsed appears at the bottom with each token of the query being a leaf in the tree. In the case of SQL, leaf nodes are lexical elements such as keywords of the language (e.g., SELECT ), names of attributes or relations, operators, and other schema elements.

The parse tree for the SQL query selecting all the enrolled students with a GPA higher than 3.5.

is shown in Figure 60.5. For this example, we are tacitly assuming the existence of two relations called Enrollment and Student which store information about enrollment records for students in a school or university.

The parse tree shown in Figure 60.5 is based on the grammar for SQL as defined in [4] (which is a subset of the full grammar for SQL). Non-terminal symbols are enclosed in angled brackets. At the root is the category < Query > which forms the goal for the parsed query. Descending down the tree, we see that this query is of the form SF W (select-from- where). In case one of the relations in the F ROM clause is a view, it must be replaced by its own parse tree since a view is essentially a query. A parse tree is said to be valid if it conforms to the syntactic rules of the grammar as well as the semantic rules on the use of the schema names.

An expression tree is a binary tree data structure that represents a logical query plan for a query after it has been translated into a relational algebra expression. The expression tree represents the input relations of the query as leaf nodes of the tree, and the relational algebra operations together with estimates for result sizes as internal nodes.

Figure 60.6 shows an example of three expression trees representing different logical query plans for the following SQL query, which selects all the students enrolled in the course ’COP 4720’ during the term ’Sp04’ who have a grade point average of 3.5 (result sizes are omitted in the figure):

SELECT Name FROM Enrollment, Student

WHERE Enrollment.ID = Student.SID AND Enrollment.Course = ’COP 4720’ AND Enrollment.TermCode = ’Sp04’ AND Student.GPA = 3.5;

An execution of a tree consists of executing an internal node operation whenever its operands are available and then replacing that internal node by the relation that results from executing the operation. The execution terminates when the root node is executed and produces the result relation for the query.

Note that expression trees representing physical query plans differ in the information that is stored in the nodes. For example, internal nodes contain information such as the operation being performed, any parameters if necessary, general strategy about the algorithm that is used, whether materialization of intermediate results or pipelining is used, and the anticipated number of buffers the operation will require (rather than result size as in logical query plans). At the leaf nodes table names are replaced by scan operators such as TableScan, SortScan, IndexScan, etc.

There is an interesting twist to the types of expression trees that are actually considered by the query optimizer. As we have previously pointed out, the number of different query plans (both logical and physical) for a given query can be very large. This is even more so the case, when the query involves the join of two or more relations since we need to take the join order into account when choosing the best possible plan. Today’s query optimizers prune a large portion of the candidate expression trees and concentrate only on the class of left-deep trees. A left-deep tree is an expression tree in which the right child of each join is a leaf (i.e., a base table). For example, in Figure 60.6, the tree labeled (a) is an example of a left-deep tree. The tree labeled (b) is an example of a nonlinear or bushy tree (a join node may have no leaf nodes), tree (c) is an example of a right-deep tree (the left child of each join node is a base table).

Besides the fact that the number of left-deep trees is smaller than the number of all trees, there is another advantage for considering only left-deep expression trees: Left-deep trees allow the query optimizer to generate more efficient plans by avoiding the intermediate storage (materialization) of join results. Note that in most join algorithms, the inner table must be materialized because we must examine the entire inner table for each tuple of the outer table. However, in a left-deep tree, all inner tables exist as base tables and are therefore already materialized. IBM DB2, Informix, Microsoft SQL Server, Oracle 8, and Sybase ASE all search for left-deep trees using a dynamic programming approach [7].

Whether choosing a logical query plan or constructing a physical query plan from a logical query plan, the query evaluation engine needs to have information about the expected cost of evaluating the expressions in a query. Cost estimation is based on statistics about the database which include number of tuples in a relation, number of disk blocks used, distribution of values for each attribute, etc. Frequent computation of statistics, especially in light of many changes to the database, lead to more accurate cost estimates. However, the drawback is increased overhead since counting tuples and values is expensive.

An important data structure for cost estimation is the histogram, which is used by the DBMS to approximate the distribution of values for a given attribute. Note that in all but the smallest databases, counting the exact occurrence of values is usually not an option. Having access to accurate distributions is essential in determining how many tuples satisfy a certain selection predicate, for example, how many students there are with a GPA value of

This is especially important in the case of joins, which are among the most expensive operations. For example, if a value of the join attribute appears in the histograms for both relations, we can determine exactly how many tuples of the result will have this value.

Using a histogram, the data distribution is approximated by dividing the range of values, for example, GPA values, into subranges or buckets. Each bucket contains the number of tuples in the relation with GPA values within that bucket. Histograms are more accurate than assuming a uniform distribution across all values.

Depending on how one divides the range of values into the buckets, we distinguish between equiwidth and equidepth histograms [7]. In equiwidth histograms, the value range is divided into buckets of equal size. In equidepth histograms, the value range is divided so that the number of tuples in each bucket is the same (usually within a small delta). In both cases, each bucket contains the average frequency. When the number of buckets gets large, histograms can be compressed, for example, by combining buckets with similar distributions. Consider the Students-Enrollments scenario from above. Figure 60.7 depicts two sample histograms for attribute GPA in relation Student. Values along the horizontal axis denote GPA, the vertical bars indicate the number of students that fall in each range. For this

example we are assuming that GPA values are rounded to one decimal and that there are 50 students total. Histogram a) is an equiwidth histogram with bucket size = 2. Histogram b) is an equidepth histogram containing between 7 and 10 students per bucket.

Consider the selection GP A = 3.5. Using the equidepth histogram, we are led to bucket 3, which contains only the GPA value 3.5 and we arrive at the correct answer, 10 (vs. 1/2 of 12 = 6 in the equiwidth histogram). In general, equidepth histograms provide better estimates than equiwidth histograms. This is due to the fact that buckets with very frequently occurring values contain fewer values. Thus the uniform distribution assumption is applied to a smaller range of values, leading to a more accurate estimate. The converse is true for buckets containing infrequent values, which are better approximated by equiwidth histograms. However, in query optimization, good estimation for frequent values are more important.

Histograms are used by the query optimizers of all of the major DBMS vendors. For example, Sybase ASE, IBM DB2, Informix, and Oracle all use one-dimensional, equidepth histograms. Microsoft’s SQL Server uses one-dimensional equiarea histograms (a combination of equiwidth and equidepth) [7].

Comments

Post a Comment